Chaos execution plane

The Chaos Execution Plane contains the components responsible for orchestrating chaos injection in the target resources. These components are installed either in an external target cluster, if an external chaos infrastructure is used, or in the host cluster containing the control plane, if a self chaos infrastructure is used. The plane can be further divided into Litmus Chaos Infrastructure components and Litmus Backend Execution Infrastructure components.

Litmus Execution Plane Components

Litmus Chaos Infrastructure components help facilitate the chaos injection, manage chaos observability, and enable chaos automation for target resources. These components include:

Workflow Controller: The Argo Workflow Controller responsible for the creation of Chaos Experiments using the Chaos Experiment CR.

Subscriber: Serves as the link between the Chaos Execution Plane and the Control Plane. It has several distinct responsibilities: performing health checks on all the components in the Chaos Execution Plane, creating a Chaos Experiment CR from a Chaos Experiment template, watching for Chaos Experiment events during execution, and sending the chaos experiment result to the Control Plane.

Event Tracker: An optional component that can trigger automated chaos experiment runs based on a set of defined conditions for given resources in the cluster. It is a controller that manages the EventTrackerPolicy CR, which holds the set of defined conditions that the Event Tracker validates. If the current state of the tracked resources matches the state defined in the EventTrackerPolicy CR, the chaos experiment run is triggered. This feature can only be used if GitOps is enabled.

Chaos Exporter: An optional component that facilitates external observability in Litmus by exporting the chaos metrics generated during the chaos injection as time-series data to the Prometheus DB for its processing and analysis.

Litmus Backend Execution Infrastructure components orchestrate the execution of Chaos Experiments in target resources. These components include:

Chaos Experiment CR: Refers to the Argo Workflow CR that describes the steps executed as part of the chaos experiment. It can be used to define failures under a certain workload condition (for example, a given percentage load), multiple (parallel) failures of dependent and independent services, and so on.

ChaosExperiment CR: Used for defining the low-level execution information for any Litmus chaos fault as well as to store the various fault tunables.

ChaosEngine CR: Used to hold information about how the chaos faults are executed. It connects an application instance with one or more chaos faults while allowing the users to specify run-level details.

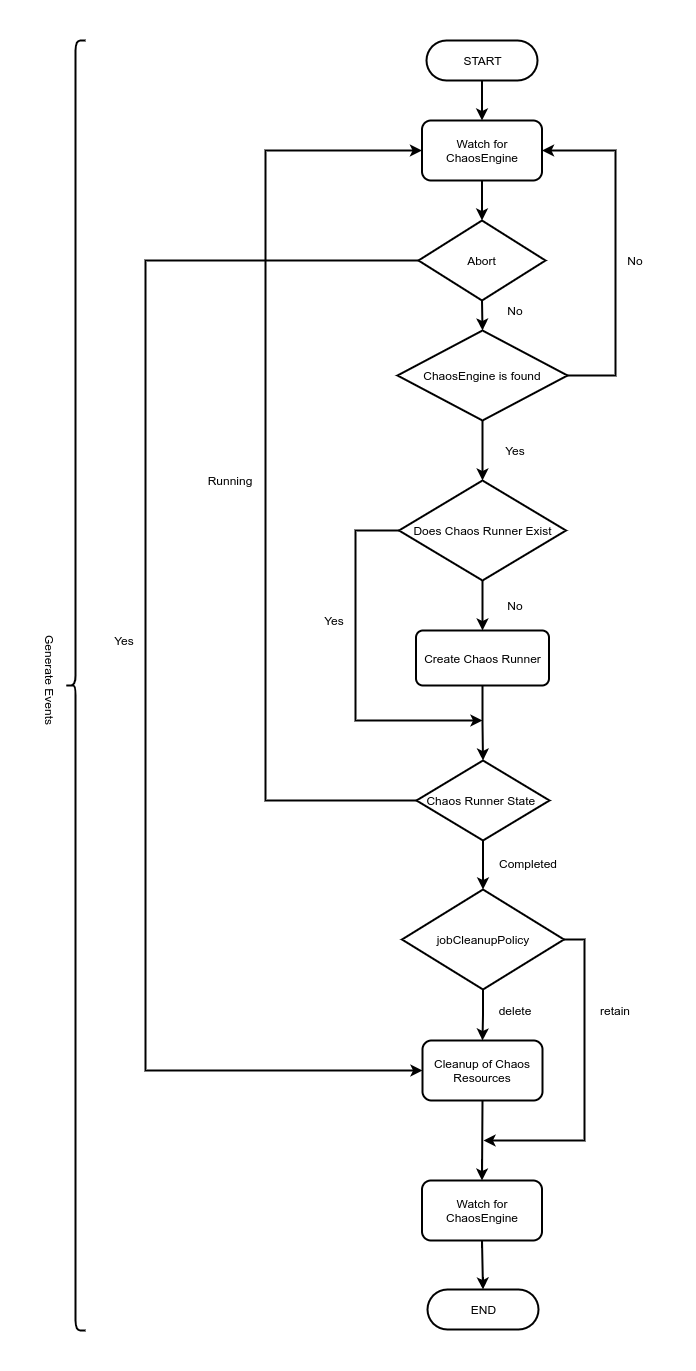

Chaos Operator: A Kubernetes custom-controller that manages the lifecycle of certain resources or applications intending to validate their "desired state". It helps reconcile the state of the ChaosEngine by performing specific actions upon CRUD of the ChaosEngine. It also defines a secondary resource (the ChaosEngine Runner pod), which is created & managed by it to implement the reconcile functions.

ChaosResult CR: Holds the results of a chaos fault, such as the ChaosEngine reference, the fault state, the verdict of the fault (on completion), and salient application/result attributes. It also acts as a source for metrics collection for observability.

Chaos Runner: Acts as a bridge between the Chaos Operator and Chaos Faults. It is a lifecycle manager for the chaos faults that creates Fault Jobs for the execution of fault business logic and monitors the fault pods (jobs) until completion.

- Fault Jobs: Refers to the pods that execute the fault logic. One fault pod is created per chaos fault in the chaos experiment.

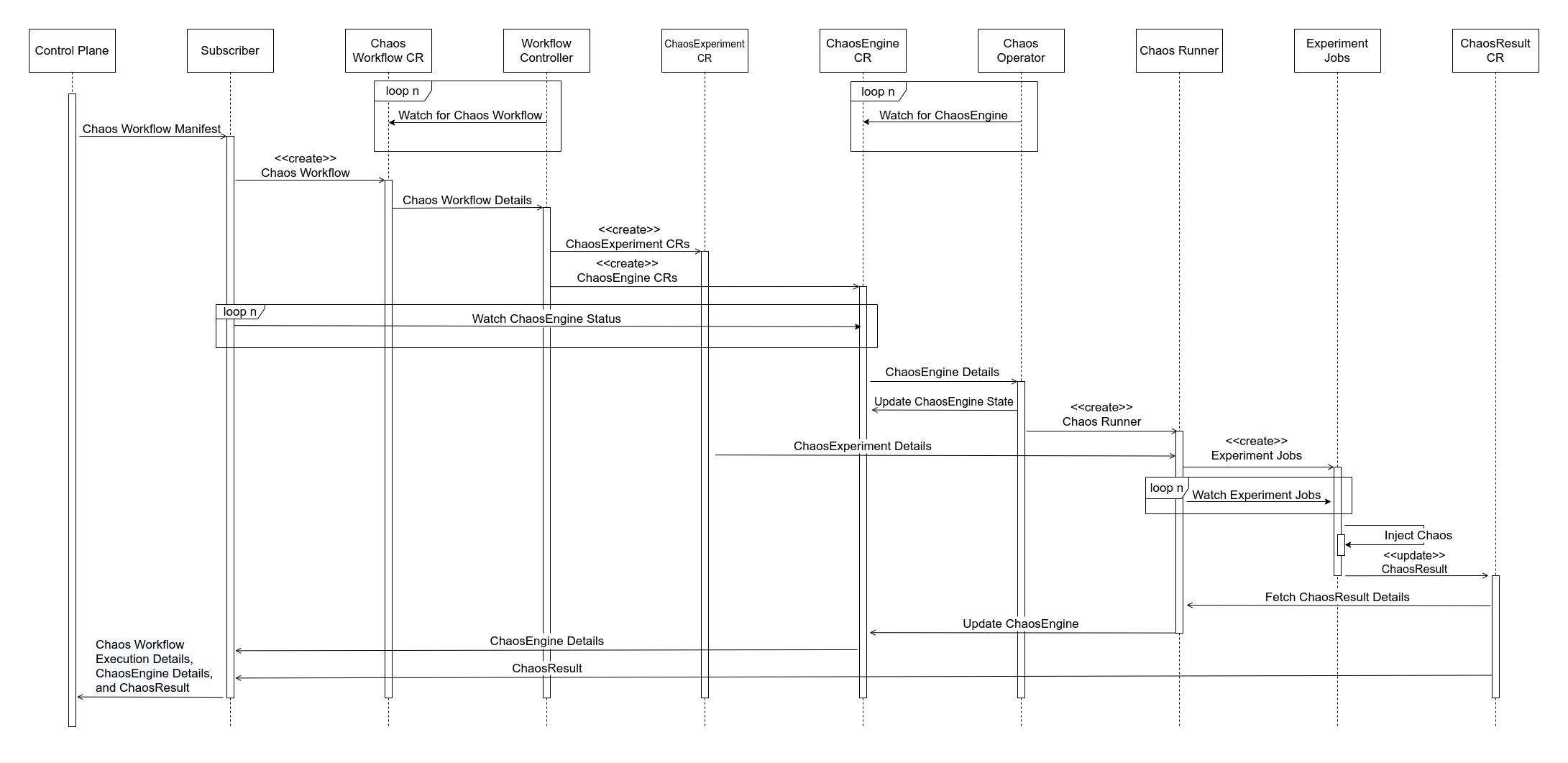

Standard Chaos Execution Plane Flow

- Subscriber receives the Chaos Experiment manifest from the Control Plane and applies the manifest to create a Chaos Experiment CR.

- Chaos Experiment CRs are tracked by the Argo Workflow Controller. When the Workflow Controller finds a new Chaos Experiment CR, it creates the ChaosExperiment (Chaos Fault) CRs and the ChaosEngine CRs for the chaos faults that are part of the chaos experiment.

- ChaosEngine CRs are tracked by the Chaos Operator. Once a ChaosEngine CR is ready, the Chaos Operator updates the ChaosEngine state to reflect that the particular ChaosEngine is now being executed.

- For each ChaosEngine resource, a Chaos Runner is created by the Chaos Operator.

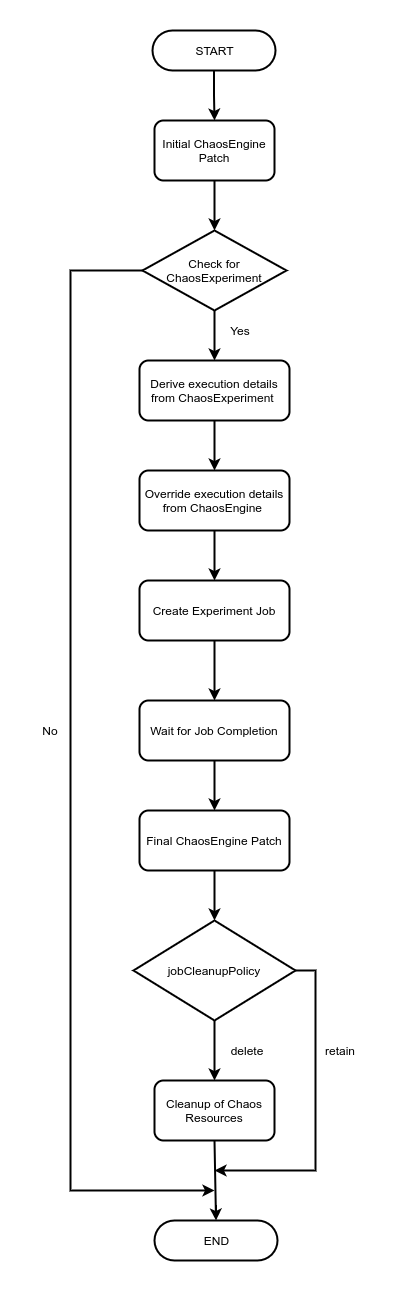

- Chaos Runner first reads the chaos parameters from the ChaosExperiment (Chaos Fault) CR and overrides them with values from the ChaosEngine CR. It then constructs the Fault Jobs and monitors them until completion.

- Fault Jobs execute the fault business logic and undertake chaos injection on target resources. Once done, the ChaosResult is updated with the fault verdict.

- Chaos Runner then fetches the updated ChaosResult and updates the ChaosEngine status as well as the verdict.

- Once the ChaosEngine is updated, Subscriber fetches the ChaosEngine details and the ChaosResult and forwards them to the Control Plane.
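The override step in the flow above, where the Chaos Runner reads defaults from the ChaosExperiment CR and replaces them with values from the ChaosEngine CR, can be sketched as a simple merge. This is a minimal illustration, not the Chaos Runner's actual code: the function name `merge_tunables` is hypothetical, and modelling tunables as lists of `name`/`value` pairs is an assumption made for the example.

```python
def merge_tunables(fault_defaults, engine_overrides):
    """Return fault tunables with ChaosEngine values taking precedence.

    Both arguments are lists of {"name": ..., "value": ...} dicts
    (an assumed shape, chosen for illustration).
    """
    # Start from the defaults declared on the fault.
    merged = {item["name"]: item["value"] for item in fault_defaults}
    # Values supplied on the engine replace the defaults with the same name.
    for item in engine_overrides:
        merged[item["name"]] = item["value"]
    return merged


# Illustrative values only.
fault_env = [
    {"name": "TOTAL_CHAOS_DURATION", "value": "30"},
    {"name": "CHAOS_INTERVAL", "value": "10"},
]
engine_env = [{"name": "TOTAL_CHAOS_DURATION", "value": "60"}]

print(merge_tunables(fault_env, engine_env))
# prints {'TOTAL_CHAOS_DURATION': '60', 'CHAOS_INTERVAL': '10'}
```

Tunables that the ChaosEngine does not mention keep the default declared on the fault, which is why only run-level details need to be specified per engine.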

It is worth noting that:

If configured, Chaos Exporter fetches data from the ChaosResult CR and converts it into a time-series format to be consumed by the Prometheus DB.
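As a rough illustration of that conversion, the sketch below renders a ChaosResult-like record as one sample line in the Prometheus text exposition format. It is not the Chaos Exporter's implementation: the metric name `chaos_fault_verdict`, the label names, and the shape of the `result` dict are all assumptions made for the example.

```python
def to_exposition(result, metric="chaos_fault_verdict"):
    """Render one ChaosResult-like dict as a Prometheus sample line.

    The metric and label names are hypothetical, not Litmus's real ones.
    """
    labels = {
        "chaosengine": result["engine"],
        "fault": result["fault"],
        "verdict": result["verdict"],
    }
    # Labels are sorted so the output is stable between runs.
    rendered = ",".join(f'{key}="{value}"' for key, value in sorted(labels.items()))
    # A value of 1 marks that this fault currently carries this verdict.
    return f"{metric}{{{rendered}}} 1"


# Illustrative record only.
sample = {"engine": "nginx-chaos", "fault": "pod-delete", "verdict": "Pass"}
print(to_exposition(sample))
# prints chaos_fault_verdict{chaosengine="nginx-chaos",fault="pod-delete",verdict="Pass"} 1
```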

An Event Tracker Policy can also be set up as part of the Backend GitOps, where the Backend GitOps Controller tracks a set of specified resources in the target cluster for changes. If any of the tracked resources changes and its resulting state matches the state defined in the Event Tracker Policy, then a pre-defined Chaos Experiment is executed.
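The matching rule described here can be sketched as a check of every policy condition against the current state of a tracked resource. This is a simplified illustration under stated assumptions: the helper names `lookup` and `policy_matches` are hypothetical, conditions are modelled as dotted `key` paths with an expected `value`, and only equality is handled.

```python
def lookup(resource, dotted_key):
    """Walk a nested dict using a dotted path such as 'spec.replicas'."""
    current = resource
    for part in dotted_key.split("."):
        current = current[part]
    return current


def policy_matches(resource, conditions):
    """Return True only if every condition equals the resource's current value."""
    try:
        return all(
            str(lookup(resource, cond["key"])) == cond["value"]
            for cond in conditions
        )
    except (KeyError, TypeError):
        # A missing field means the tracked state cannot match the policy.
        return False


# Illustrative policy and resource only.
conditions = [{"key": "spec.replicas", "value": "3"}]
deployment = {"kind": "Deployment", "spec": {"replicas": 3}}

if policy_matches(deployment, conditions):
    print("state matches policy: trigger the pre-defined chaos experiment")
```

In this sketch a resource that lacks a referenced field simply does not match, so no experiment would be triggered for it.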

Note

With the latest release of LitmusChaos 3.0.0:

- The term Chaos Delegate/Agent has been changed to Chaos Infrastructure.

- The term Chaos Experiment has been changed to Chaos Fault.

- The term Chaos Scenario/Workflow has been changed to Chaos Experiment.

Refer to the Glossary doc for more info.